Opus 4.7 dropped last week. Lots of excitement. Then the other shoe dropped. I ran identical coding tasks against both Opus 4.6 and 4.7 to see if the capability improvements justify the cost increase. Both models passed all 10 tests. The quality difference is real — but Opus 4.7 consumed 3.6× more tokens and cost 3.6× more for the same outcome.

That’s not a typo. Same task, same success rate, 3.6× the cost. I have the receipts.

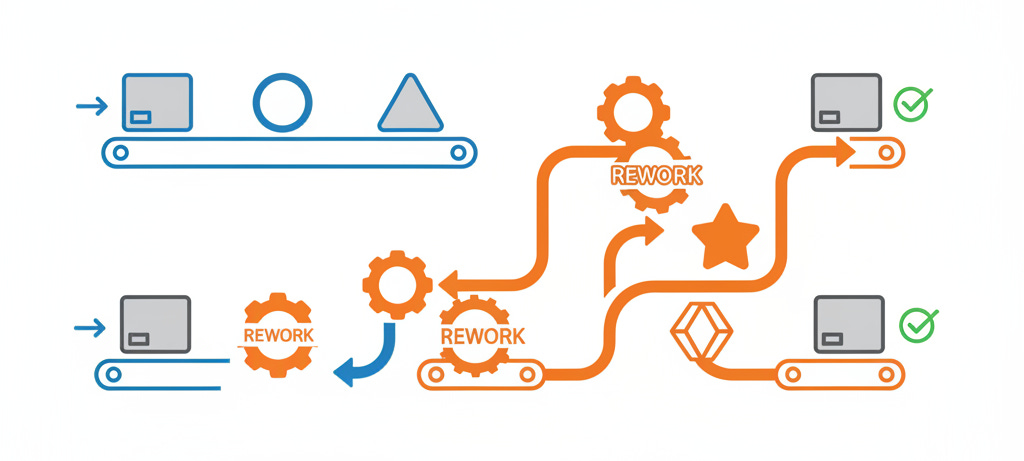

Anthropic says “pricing unchanged” because the per-token rates stayed the same. What they don’t mention is that Opus 4.7 systematically burns through more tokens to complete identical work. The model writes, then revises. Opus 4.6 writes correctly the first time. Both approaches work. Only one bills you for the revision process.

TL;DR

Both models passed 10/10 tests: Quality improvements are measurable but incremental (better typing, more thorough code)

3.6× cost increase for identical outcomes: $0.38 vs $1.38 for a 30-minute coding task in controlled testing

Token consumption drives cost, not capability: Opus 4.7’s iterative working style consumes 2.9× more output tokens per task

Agentic mode required: One-shot testing shows 4.7 fails 9/10 tests without tool access, while 4.6 passes perfectly

Per-token rates unchanged, real bill moved anyway: 4.7 burns 4.8× more cache tokens per task — the rate card stays flat, your invoice doesn’t

The Controlled Test

Last week I ran a head-to-head benchmark using the same Level 1 coding task for both models: build a complete Python CLI tool (Markdown Table Formatter) from scratch with full test coverage.

Test setup:

Framework: claude_agent_sdk v0.1.64, full agentic mode

Models: claude-opus-4-6 vs claude-opus-4-7

Success criteria: Pass all 10 provided pytest tests

Execution: Concurrent runs with identical prompts

Both models succeeded. The difference was entirely in how they got there.

The Numbers

| Metric | Opus 4.6 | Opus 4.7 | Ratio |
| --- | --- | --- | --- |
| Wall clock time | 114.8s | 259.1s | 2.3× slower |
| Agent turns | 17 | 23 | 35% more |
| Output tokens | 6,384 | 18,289 | 2.9× more |
| Cache read tokens | 215,853 | 1,034,165 | 4.8× more |
| Total cost | $0.38 | $1.38 | 3.6× more expensive |
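For anyone who wants to check the ratio column, here is a minimal sketch that recomputes it from the raw measurements. The dictionary keys and the `ratio` helper are illustrative names chosen for this example, not part of any SDK.

```python
# Measurements copied from the table above, as (Opus 4.6, Opus 4.7) pairs.
metrics = {
    "wall_clock_s": (114.8, 259.1),
    "agent_turns": (17, 23),
    "output_tokens": (6_384, 18_289),
    "cache_read_tokens": (215_853, 1_034_165),
    "total_cost_usd": (0.38, 1.38),
}


def ratio(old: float, new: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


# One ratio per metric: Opus 4.7 relative to Opus 4.6.
ratios = {name: ratio(old, new) for name, (old, new) in metrics.items()}

for name, value in ratios.items():
    print(f"{name:>18}: {value:.2f}x")
```

Rounded to one decimal place, these reproduce the 2.3×, 2.9×, 4.8× and 3.6× figures; the agent-turn ratio of about 1.35 is the "35% more" entry.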

The Behavioral Fingerprint

The tool usage patterns reveal why 4.7 costs more:

| Tool | Opus 4.6 | Opus 4.7 |
| --- | --- | --- |
| Write | 6 | 7 |
| Bash | 6 | 9 |
| Read | 4 | 1 |
| Edit | 0 | 5 |

Opus 4.7 made 5 Edit calls to revise files after writing them. Opus 4.6 made zero — it wrote all 6 source files correctly in a single pass, ran pytest once, passed 10/10 tests.
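One rough way to put a number on that fingerprint is to look at how much of each run went to revision. The sketch below uses only the tool counts reported above; the `summarize` helper and its field names are made up for illustration.

```python
# Tool-call counts copied from the table above.
tool_calls = {
    "Opus 4.6": {"Write": 6, "Bash": 6, "Read": 4, "Edit": 0},
    "Opus 4.7": {"Write": 7, "Bash": 9, "Read": 1, "Edit": 5},
}


def summarize(calls: dict[str, int]) -> dict[str, float]:
    """Summarize how revision-heavy a run was, based on its tool counts."""
    total = sum(calls.values())
    return {
        "total_calls": total,
        # Fraction of all tool calls spent revising existing files.
        "edit_share": calls["Edit"] / total,
        # Revisions per file-writing call.
        "edits_per_write": calls["Edit"] / calls["Write"],
    }


summaries = {model: summarize(calls) for model, calls in tool_calls.items()}

for model, summary in summaries.items():
    print(
        f"{model}: {summary['total_calls']} calls, "
        f"{summary['edit_share']:.0%} edits, "
        f"{summary['edits_per_write']:.2f} edits per write"
    )
```

On these counts, a bit under a quarter of Opus 4.7's tool calls were edits, against none for Opus 4.6.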

The cache token burn (4.8× more) suggests 4.7 does extended internal reasoning between each tool call. It’s thinking harder, which shows up in better code quality: more type hints (across 35 vs 25 function definitions) and more thorough coverage (820 vs 471 lines of code). But you pay for that thinking process.

Quality vs Cost Trade-off

The output quality difference is genuine. Opus 4.7’s code was more defensively written — better typed, more thorough on edge cases. When you’re in the middle of debugging a genuinely hairy distributed system problem or making an architectural call with real downstream implications, that extra care is worth something.

But for this Level 1 coding challenge, both approaches delivered identical functionality. The question becomes: are 40% more function definitions and 74% more lines of code worth a 260% higher bill?
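The percentages in that question follow directly from the counts reported earlier. A small sketch of the arithmetic, with `percent_increase` as a helper name invented for this example:

```python
def percent_increase(old: float, new: float) -> float:
    """Percentage by which `new` exceeds `old`."""
    return (new - old) / old * 100


# Inputs are the Opus 4.6 and Opus 4.7 figures reported in the article.
increases = {
    "function_definitions": percent_increase(25, 35),
    "lines_of_code": percent_increase(471, 820),
    "total_cost": percent_increase(0.38, 1.38),
}

for name, value in increases.items():
    print(f"{name}: +{value:.0f}%")
```

The unrounded cost increase is closer to 263%; the 260% figure is what you get from the rounded 3.6× ratio.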

When the Premium Isn’t Optional

I tested both models in one-shot mode (no tools, single response) to see if you could avoid the iterative cost overhead.

| Metric | Opus 4.6 | Opus 4.7 |
| --- | --- | --- |
| Output tokens | 4,725 | 23,907 |
| Cost | $0.27 | $0.94 |
| Tests passed | 10/10 | 1/10 |

Opus 4.7 failed catastrophically without tool access. It generated 5× more tokens but couldn’t follow output format instructions — most files were unparseable and 9 of 10 tests failed. Opus 4.6 passed perfectly on the first attempt.

This reveals a structural dependency: Opus 4.7’s quality advantages require the full agentic feedback loop. You can’t switch to a cheaper execution mode to control costs. The iterative self-correction that makes it better is also what makes it expensive — there’s no cheaper version of how this model works.

Scale It Out

Scale these numbers to something realistic:

100 equivalent coding tasks per day:

Opus 4.6: ~$38/day → ~$13,870/year

Opus 4.7: ~$138/day → ~$50,370/year

Annual cost increase: +$36,500
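The projection is simple enough to rerun with your own volume. This sketch assumes, as the figures above do, 100 tasks a day for all 365 days of the year at the per-task costs measured in the agentic test; the constant and function names are illustrative.

```python
# Per-task costs from the agentic benchmark above.
COST_PER_TASK_USD = {
    "claude-opus-4-6": 0.38,
    "claude-opus-4-7": 1.38,
}
TASKS_PER_DAY = 100   # assumption carried over from the projection above
DAYS_PER_YEAR = 365   # assumes the workload runs every calendar day


def annual_cost(cost_per_task: float,
                tasks_per_day: int = TASKS_PER_DAY,
                days: int = DAYS_PER_YEAR) -> float:
    """Project a yearly bill from a measured per-task cost."""
    return round(cost_per_task * tasks_per_day * days, 2)


annual = {model: annual_cost(cost) for model, cost in COST_PER_TASK_USD.items()}
delta = round(annual["claude-opus-4-7"] - annual["claude-opus-4-6"], 2)

for model, cost in annual.items():
    print(f"{model}: ${cost:,.0f}/year")
print(f"Annual cost increase: +${delta:,.0f}")
```

Swap in your own per-task cost and daily volume; a five-day week (about 260 days) shrinks every figure proportionally.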

This matches what production teams are reporting. The Finout analysis documented overnight cost jumps from $500 to $675/day after deploying 4.7. My testing provides a mechanistic explanation: the model’s working style is token-intensive by design.

The cost increase compounds with Anthropic’s separate tokenizer changes that increase consumption up to 35% for identical prompts. You get hit twice: more tokens per task, plus the same text now counts as more tokens.

This Is Anthropic’s Move, Not the Industry’s

Other providers aren’t doing this. GPT-5.4 achieves comparable benchmark performance without the tokenizer change. Anthropic can pull this off because they’re ahead on benchmarks right now — that’s the advantage, and they’re using it.

Which means this is actually a model selection problem, not a budget problem.

I’m not upgrading my agentic workflows to 4.7 by default. For complex architectural work where the reasoning depth matters (distributed systems debugging, refactoring decisions with downstream implications), yes, 4.7 earns the premium. For routine code generation, test writing, documentation? 4.6 passes the same tests at a bit over a quarter of the cost, as I just demonstrated.

Sonnet is even more aggressive on cost for work that doesn’t need Opus-level reasoning at all. I’ve been pushing more of my day-to-day agentic tasks there.

GPT-5.4 is worth keeping in rotation too. Comparable coding benchmark performance, no tokenizer games, and the competitive pressure helps if you ever need to push back on Anthropic pricing.

The Reddit community caught the tokenizer changes within hours of release while Anthropic’s communications stayed focused on “unchanged pricing.” That’s the early warning system. Watch community cost reports when a new model drops, not the vendor announcement.

Anthropic will keep doing this as long as they’re leading. The way you stay ahead of it is knowing your actual token consumption per task — not the rate card, the real burn — and routing work to the cheapest model that gets the job done.
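As a rough sketch of what "cheapest model that gets the job done" could look like in practice: record cost and pass rate per model and execution mode, then pick the cheapest profile that clears your quality bar. The `RunProfile` class and `cheapest_passing` function are hypothetical names for this example; the data is the four runs reported in this post, which is far too small a sample to route production traffic on.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class RunProfile:
    """Measured cost and outcome for one model in one execution mode."""
    model: str
    mode: str
    cost_usd: float
    tests_passed: int
    tests_total: int

    @property
    def pass_rate(self) -> float:
        return self.tests_passed / self.tests_total


# The four runs reported in this post (agentic and one-shot).
PROFILES = [
    RunProfile("claude-opus-4-6", "agentic", 0.38, 10, 10),
    RunProfile("claude-opus-4-7", "agentic", 1.38, 10, 10),
    RunProfile("claude-opus-4-6", "one-shot", 0.27, 10, 10),
    RunProfile("claude-opus-4-7", "one-shot", 0.94, 1, 10),
]


def cheapest_passing(profiles: list[RunProfile],
                     min_pass_rate: float = 1.0) -> Optional[RunProfile]:
    """Return the lowest-cost profile meeting the pass-rate bar, if any."""
    eligible = [p for p in profiles if p.pass_rate >= min_pass_rate]
    return min(eligible, key=lambda p: p.cost_usd, default=None)


choice = cheapest_passing(PROFILES)
print(choice)
```

On this data the pick is Opus 4.6 in one-shot mode at $0.27. The useful part is the habit: the inputs are measured costs per task, not rate-card prices.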

Bob Matsuoka is CTO of Duetto and writes about AI-powered engineering at HyperDev.

Related reading:

AI Power Ranking — Tool comparisons and benchmarks for AI practitioners

LinkedIn Newsletter — Strategic AI insights for CTOs and engineering leaders

The 4.7 Edit-loop vs 4.6 one-pass is the core split. Did you try pushing up 4.6's thinking budget to see if the quality gap closes before the cost gap matters?