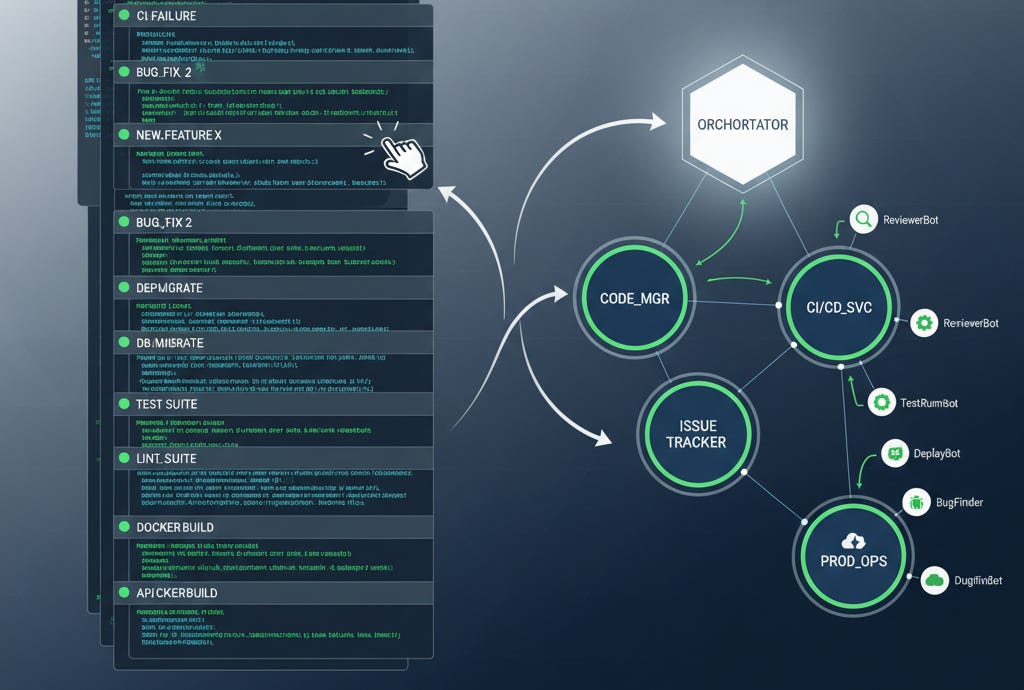

I’m looking at my (iTerm) terminal right now. Ten tmux sessions. Each session holds a different project context—one monitoring CI failures, another handling a code review, a third debugging a production issue.

This is the reality of modern agent development work.

TL;DR

Multi-session reality: Power users average 8-12 terminal sessions; most work involves modification, bug response, and PR handling—not new code generation

Natural workflow origination: Future systems trigger from product team actions, CI failures, and automated events rather than human prompts

Orchestration evolution: From human-orchestrated agents to orchestration-of-orchestrators where prime coordinators are non-human

Production examples: Stripe’s Minions (1,300 PRs/week), GitLab’s Duo Agent Platform, Meta’s REA demonstrate hierarchical agent orchestration

Architecture shift: Claude Code’s SDK model enables workflow-driven development through persistent, context-aware agent orchestration

The 10-Tab Reality

According to recent developer workflow studies, tmux has become the standard for AI-assisted development, with persistent sessions solving the context-switching tax. The productivity advantage isn’t the multiplexing—it’s the persistence. Projects become environments you step in and out of rather than things you open and close.

But here’s what the productivity tutorials miss: most of those tabs aren’t generating software.

My current session breakdown:

3 sessions: non-coding (my CTO knowledge base, currently analyzing our Sumo use; a writing assistant; and our Duetto product management framework)

4 sessions: coding (various internal tools and MCP connectors)

2 sessions: coding (new projects)

1 session: code review

The 8:2 ratio (eight sessions on existing work, two on new projects) holds across most senior developers I’ve observed. Most development work involves responding to existing systems, not creating new ones.

This distribution points toward something significant: the future of development orchestration isn’t human-initiated.

Beyond Prompt-Driven Development

Claude Code’s new SDK architecture reflects this reality. Instead of starting with human prompts, work originates from natural workflow events:

Product team creates ticket → Implementation specification generated

CI pipeline fails → Diagnostic agent analyzes failure, proposes fix

PR submitted → Review agent examines code, suggests improvements

Production alert triggered → Incident response agent investigates, documents findings

Security scan detects vulnerability → Remediation agent generates patch

The pattern: Event → Agent Response → Human Review → Autonomous Resolution.

Humans remain in the loop, but as orchestrators and validators rather than initiators. The question shifts from “What should I build?” to “How should this system respond?”
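To make the Event → Agent Response → Human Review → Autonomous Resolution pattern concrete, here is a minimal sketch. Everything in it is an assumption for illustration: the event names, the `Proposal` type, and the handler functions are hypothetical stand-ins, not part of Claude Code’s SDK or any real product.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Event:
    kind: str       # hypothetical event names, e.g. "ci_failed", "pr_opened"
    payload: dict


@dataclass
class Proposal:
    agent: str
    summary: str
    approved: bool = False  # flipped only by a human reviewer


# Stand-in handlers; a real handler would invoke an agent here.
def diagnose_ci(event: Event) -> Proposal:
    return Proposal("diagnostic", f"Proposed fix for job {event.payload['job']}")


def review_pr(event: Event) -> Proposal:
    return Proposal("review", f"Review notes for PR {event.payload['pr']}")


ROUTES: Dict[str, Callable[[Event], Proposal]] = {
    "ci_failed": diagnose_ci,
    "pr_opened": review_pr,
}


def handle(event: Event) -> Proposal:
    """Route a workflow event to the agent registered for it."""
    handler = ROUTES.get(event.kind)
    if handler is None:
        raise ValueError(f"no agent registered for {event.kind!r}")
    return handler(event)


def resolve(proposal: Proposal) -> str:
    """Resolution happens only after the human review step."""
    if not proposal.approved:
        return "awaiting human review"
    return f"resolved by {proposal.agent} agent"
```

Note that nothing in this flow starts from a prompt. The human shows up once, at the `approved` flag.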

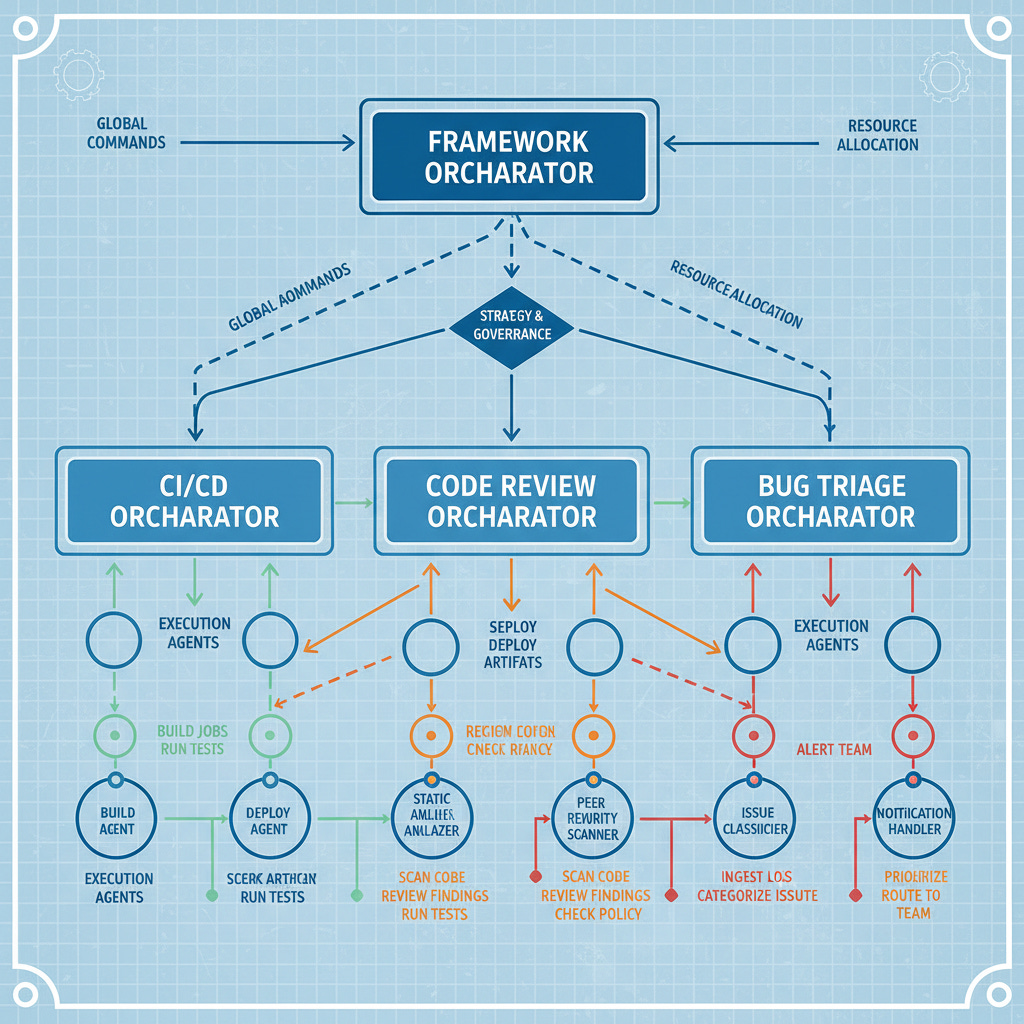

Orchestration of Orchestrators: Production Examples

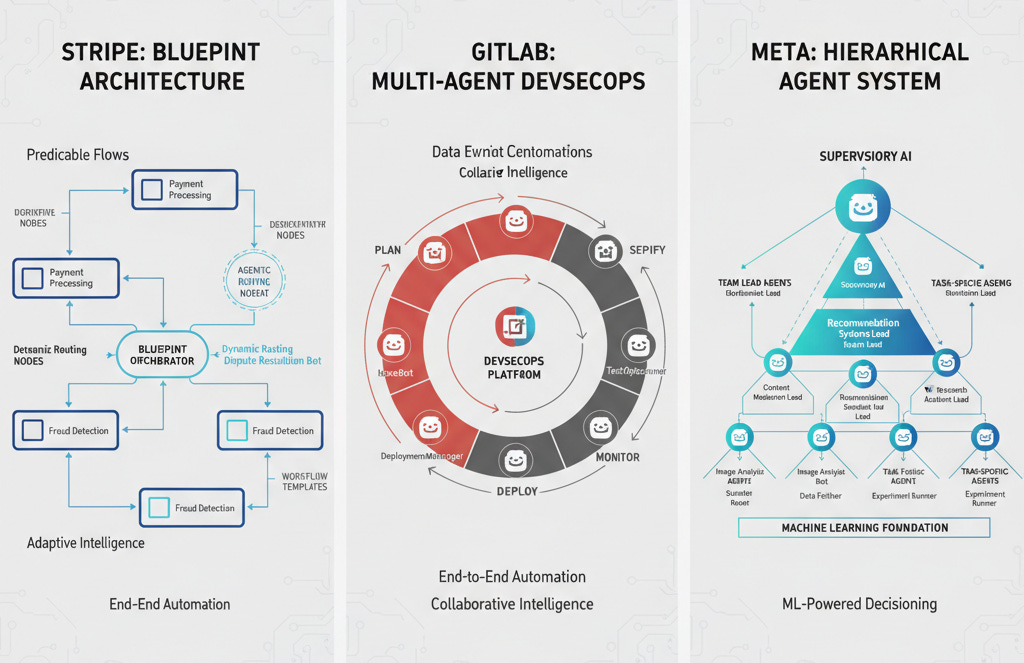

Stripe’s Blueprint Architecture

Stripe’s Minions system demonstrates mature orchestration-of-orchestrators. Their “blueprint” pattern alternates between deterministic code nodes and agentic reasoning loops, generating 1,300+ pull requests weekly.

Architecture insight: Each blueprint functions as a strict contract between orchestration and execution. Task definitions specify input requirements, output formats, constraints, and success criteria. The orchestrator manages the workflow; agents handle implementation.

Security model: Each Minion execution runs in an isolated VM with no internet or production access. The system has submission authority but not merge authority: all changes require human review.
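A rough sketch of how such a blueprint might look follows. This is not Stripe’s code. The `TaskContract`, `Node`, and `run_blueprint` names are my own assumptions, the "agent" step is a plain function standing in for a reasoning loop, and constraints are folded into the success check for brevity.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]


@dataclass(frozen=True)
class TaskContract:
    required_inputs: Tuple[str, ...]
    required_outputs: Tuple[str, ...]
    success: Callable[[State], bool]


@dataclass(frozen=True)
class Node:
    name: str
    agentic: bool                    # False = deterministic code, True = agent loop
    run: Callable[[State], State]


def run_blueprint(nodes: List[Node], contract: TaskContract, state: State) -> State:
    """Run nodes in order and check the contract before submitting."""
    missing = [k for k in contract.required_inputs if k not in state]
    if missing:
        raise ValueError(f"missing inputs: {missing}")
    for node in nodes:
        state = {**state, **node.run(state)}
    ok = all(k in state for k in contract.required_outputs) and contract.success(state)
    # The runner can only submit for review; merging stays with a human.
    return {**state, "status": "submitted_for_review" if ok else "failed_contract"}


# Hypothetical steps: deterministic, agentic, deterministic.
def checkout(state: State) -> State:
    return {"workspace": f"/tmp/{state['ticket']}"}


def fake_agent_edit(state: State) -> State:
    return {"diff": "+ fixed line"}


def lint(state: State) -> State:
    return {"lint_ok": bool(state.get("diff"))}


blueprint = [
    Node("checkout", False, checkout),
    Node("implement", True, fake_agent_edit),
    Node("lint", False, lint),
]
contract = TaskContract(("ticket",), ("diff", "lint_ok"), lambda s: s["lint_ok"] is True)
```

The design choice worth noticing is that there is no "merged" status at all, which mirrors the submission-but-not-merge authority described above.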

GitLab’s Intelligent Orchestration

GitLab’s Duo Agent Platform treats agents as durable actors that plan, modify code, fix pipelines, and enforce security with traceability. Multiple AI agents handle parallel tasks—code generation, testing, CI/CD fixes—while developers maintain oversight through defined rules.

Orchestration insight: GitLab positions itself as an AI orchestration plane where humans and agents share delivery responsibility. The platform coordinates multi-agent workflows across the entire software lifecycle rather than providing isolated AI tools.

Meta’s Hierarchical Agent Systems

Meta’s Ranking Engineer Agent (REA) demonstrates autonomous ML lifecycle management. REA Planner and REA Executor components, supported by shared skill and knowledge systems, autonomously evolve ads ranking models at scale.

Acquisition significance: Meta’s $2B Manus acquisition focused on orchestration infrastructure rather than foundation models. Manus’s achievement was engineering an execution layer enabling models to browse, code, manipulate files, and complete multi-step workflows autonomously.

The Architecture Implications

Beyond the Single-Agent Model

The production examples reveal a consistent pattern: successful autonomous development requires hierarchical orchestration rather than monolithic AI assistants.

Traditional approach: Human → Single Agent → Code

Emerging pattern: Event → Orchestrator → Specialized Agents → Validation → Resolution
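The emerging pattern can be sketched in a few lines. This is an illustration under my own assumptions: the agent names, the `orchestrate` function, and the validation rule are all hypothetical, and the specialized agents are simple lambdas rather than real model calls.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

Agent = Callable[[str], str]


def orchestrate(event: str, agents: Dict[str, Agent],
                validate: Callable[[str, str], bool]) -> dict:
    """Fan an event out to specialized agents, validate, then resolve or escalate."""
    with ThreadPoolExecutor(max_workers=len(agents) or 1) as pool:
        futures = {name: pool.submit(agent, event) for name, agent in agents.items()}
        outputs = {name: fut.result() for name, fut in futures.items()}
    rejected = sorted(name for name, out in outputs.items() if not validate(name, out))
    return {
        "event": event,
        "outputs": outputs,
        "rejected": rejected,
        "resolution": "escalate_to_human" if rejected else "resolved",
    }


# Hypothetical specialists; "docs" returns nothing so it fails validation.
agents: Dict[str, Agent] = {
    "tests": lambda e: f"tests written for {e}",
    "fix": lambda e: f"patch drafted for {e}",
    "docs": lambda e: "",
}


def non_empty(name: str, output: str) -> bool:
    return bool(output.strip())
```

Calling `orchestrate("ci_failed:build-42", agents, non_empty)` escalates because one specialist produced nothing, which is the validation step doing its job.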

Context Preservation at Scale

The tmux paradigm of persistent sessions maps directly to agent orchestration. Instead of recreating context for each interaction, systems maintain ongoing project understanding across multiple concurrent workflows.

Implementation insight: iTerm2’s tmux integration (-CC mode) provides the UI pattern for agent orchestration—persistent remote workspaces with native interface feel. The same architecture principles apply to agent coordination.
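One way to picture the persistence idea is a per-workflow context store that survives process restarts, the way a tmux session survives a closed terminal window. The sketch below is an assumption on my part, not how any of the systems above store context: the `ContextStore` class and its JSON-file layout are hypothetical.

```python
import json
from pathlib import Path


class ContextStore:
    """Keeps one JSON file of notes per workflow so context outlives a process."""

    def __init__(self, root: Path):
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)

    def _path(self, workflow_id: str) -> Path:
        return self.root / f"{workflow_id}.json"

    def load(self, workflow_id: str) -> dict:
        path = self._path(workflow_id)
        if path.exists():
            return json.loads(path.read_text())
        return {"notes": []}

    def append_note(self, workflow_id: str, note: str) -> dict:
        # Read, update, and write back the whole context for this workflow.
        ctx = self.load(workflow_id)
        ctx["notes"].append(note)
        self._path(workflow_id).write_text(json.dumps(ctx))
        return ctx
```

A second `ContextStore` pointed at the same directory picks up where the first left off, so each workflow resumes instead of rebuilding its understanding from scratch.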

Where This Leads

Non-Human Prime Orchestrators

The logical endpoint isn’t humans managing multiple agents—it’s orchestrating systems that manage agent ecosystems. According to Gartner’s 2025 Agentic AI research, nearly 50% of surveyed vendors identified AI orchestration as their primary differentiator.

Pattern emergence: Meta-agents or orchestrator-generalists will control specialized agents, assign tasks, interpret results, and revise goals in real time. Hierarchical orchestration becomes essential for enterprise-scale implementations.

The Developer Role Evolution

Instead of a developer managing 10 terminal sessions, a framework orchestrates autonomous workflows. Each workflow maintains its own context, responds to its own triggers, and escalates to human attention when required. Some of those workflows will be driven by humans, experimentation, or new development; the majority will respond to the automated lifecycle.

Skills that matter:

Workflow boundary definition: Which autonomous streams can operate independently?

Escalation criteria design: When do workflows require human intervention?

Cross-workflow dependency management: How do autonomous streams coordinate?

Quality gate enforcement: What validation must occur before autonomous resolution?
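Escalation criteria and quality gates are easiest to reason about when written down as explicit rules. The sketch below is one hypothetical way to do that. The fields on `WorkflowResult` and the 20-file threshold are assumptions chosen for illustration, not recommendations.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class WorkflowResult:
    tests_passed: bool
    files_changed: int
    touches_sensitive_path: bool


def escalation_reasons(result: WorkflowResult, max_files: int = 20) -> List[str]:
    """Return the reasons a human must look at this result; empty means none."""
    reasons = []
    if not result.tests_passed:
        reasons.append("tests failed")
    if result.files_changed > max_files:
        reasons.append("change too large")
    if result.touches_sensitive_path:
        reasons.append("sensitive path touched")
    return reasons
```

An empty list means the workflow may resolve on its own. Anything else goes to a person, along with the reasons.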

Implementation Considerations

Teams experimenting with orchestrated autonomous development should consider:

Event-driven architecture: Which existing workflows could trigger autonomous responses?

Context preservation systems: How will agent workflows maintain project understanding?

Isolation and security: What boundaries prevent autonomous agents from causing damage?

Human oversight integration: Where do human validation points occur in autonomous workflows?

Cross-workflow coordination: How do parallel autonomous streams avoid conflicts?
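For the coordination question, one simple starting point is to have each workflow claim the paths it intends to modify before it begins. The sketch below is a hypothetical in-process version, with a `PathClaims` class I made up for illustration. A real deployment would need a shared store rather than a Python dict.

```python
import threading
from typing import Dict, Iterable


class PathClaims:
    """Tracks which workflow owns which paths so parallel streams do not collide."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._owner: Dict[str, str] = {}

    def try_claim(self, workflow: str, paths: Iterable[str]) -> bool:
        wanted = set(paths)
        with self._lock:
            # Refuse the whole claim if any path belongs to another workflow.
            if any(self._owner.get(p, workflow) != workflow for p in wanted):
                return False
            for p in wanted:
                self._owner[p] = workflow
            return True

    def release(self, workflow: str) -> None:
        with self._lock:
            self._owner = {p: w for p, w in self._owner.items() if w != workflow}
```

Claims are all-or-nothing on purpose: a workflow that gets half its paths is more dangerous than one that waits.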

The transition from 10-tab manual orchestration to autonomous lifecycle orchestration isn’t theoretical. Stripe, GitLab, and Meta demonstrate production implementations. The open questions are implementation timeline and organizational readiness.

Early adopters are discovering that the competitive advantage comes not from having the smartest individual AI agents, but from orchestrating networks of specialized agents that collaborate effectively at scale.

Bob Matsuoka is CTO of Duetto and writes about AI-powered engineering at HyperDev.

Related reading:

Stripe’s Minions: Inside Their Enterprise AI Coding Agent Strategy — Blueprint orchestration architecture and production metrics

GitLab Duo Agent Platform — Intelligent orchestration across software lifecycle

Tmux Complete Guide: AI-Powered Multi-Agent Workflows — Terminal multiplexing for autonomous development

AI Power Ranking — Tool comparisons and benchmarks for AI practitioners

LinkedIn Newsletter — Strategic AI insights for CTOs and engineering leaders